Prefer a window?

We built that too.

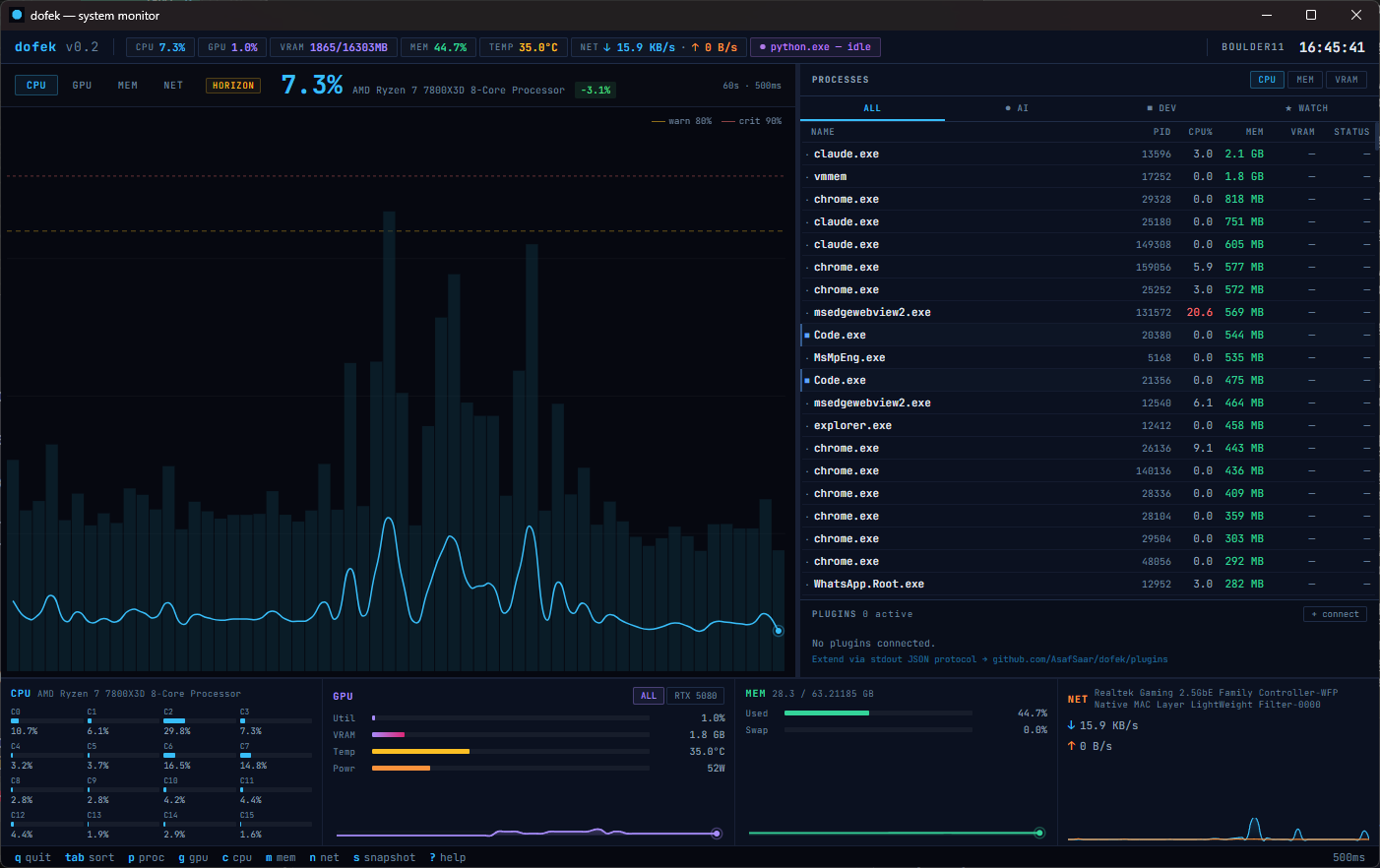

The desktop edition is a Tauri-powered native app with the same real-time data engine and trading-terminal aesthetic. It adds mouse interaction, resizable panes, and a windowed layout that sits alongside your other tools.

- Resizable chart and watchlist panes via drag handle

- Candlestick and horizon chart modes with tab switching

- Process search, tree view, click-to-select, kill with confirmation

- Full keyboard shortcut support (same bindings as the TUI)

- Lightweight: built on Tauri, not Electron, for a small binary and low overhead